Curve CryptoPool with Donations

Introduction

In this article, we will dive into the idea and implementation of CryptoPool with Donations.

Disclaimer: we look at the implementation of donations in the TwoCrypto pool at commit 387fbe5f12473ef0e1f0c6a76bc38f1ca0da669d.

Also, we assume that you are already familiar with the concepts of StableSwap and CryptoSwap. For readers who wish to learn or revisit the formula derivations, see CurveV1 and CurveV2.

Now, let’s go to the origins.

Stableswap

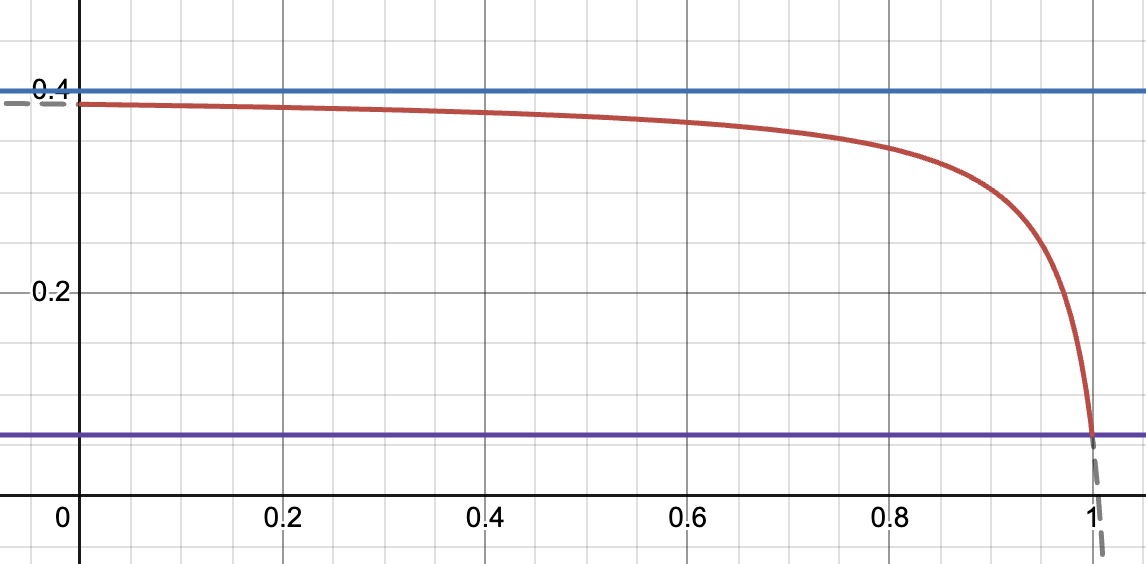

StableSwap was the first version of Curve pools. It merges the constant-product and constant-sum curves in one AMM, providing deeper liquidity and lower slippage for 1:1 pegged assets.

StableSwap formula:

$$\chi D^{n-1} \sum_i x_i + \prod_i x_i = \chi D^n + \left(\frac{D}{n}\right)^n, \qquad \chi = \frac{A \prod_i x_i}{(D/n)^n}$$

- $x_i$: balance of token $i$ deposited.

- $n$: number of assets in the pool.

- $D$: total balance of the pool when all the coins' prices are equal (1 USD each).

Note that $\sum_i x_i = D$ if and only if all balances are equal, i.e. $x_i = D/n$.

- $A$: amplification parameter, defined by protocol governance.

- $\chi$: a weight that controls the balance between the constant-sum and constant-product formulas. As the pool imbalance grows, $\chi$ decreases and the curve transitions to the constant-product formula. In a fully balanced pool, $\chi = A$. By controlling $A$, we can change the "area" of the constant-sum curve that is used.
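To make the invariant concrete, below is a minimal Python sketch (not Curve's contract code) that solves it for $D$ with Newton's method. It uses the StableSwap invariant in the equivalent form $A n^n \sum x_i + D = A n^n D + \frac{D^{n+1}}{n^n \prod x_i}$. The function name `get_d` is hypothetical, and `amp` is the whitepaper $A$ rather than any scaled on-chain value.

```python
def get_d(xp: list[int], amp: int, max_iter: int = 255) -> int:
    """Solve the StableSwap invariant for D with Newton's method (integer math).

    Uses A*n^n*sum(x) + D = A*n^n*D + D^(n+1) / (n^n * prod(x)).
    `amp` is the whitepaper A (not a contract-scaled value).
    """
    n = len(xp)
    s = sum(xp)
    if s == 0:
        return 0
    ann = amp * n**n
    d = s  # the constant-sum value is a good starting guess
    for _ in range(max_iter):
        # d_p = D^(n+1) / (n^n * prod(x)), built up one factor at a time
        d_p = d
        for x in xp:
            d_p = d_p * d // (x * n)
        d_prev = d
        # Newton step: D <- (Ann*S + n*D_P) * D / ((Ann - 1) * D + (n + 1) * D_P)
        d = (ann * s + n * d_p) * d // ((ann - 1) * d + (n + 1) * d_p)
        if abs(d - d_prev) <= 1:
            return d
    raise ValueError("D did not converge")
```

For a balanced pool the solver returns $D = \sum x_i$. For an imbalanced pool it returns a value below the sum, and a larger `amp` keeps it closer to the sum.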

Choosing $A$: what you gain and what you pay

A larger $A$ stretches and flattens the near-peg region. Quotes stay closer to 1:1 over a wider range of inventories, slippage for traders is lower, and the pool can clear more flow before leaving the flat zone. In calm markets, this improves capital efficiency for pegged pairs.

Misconfiguring $A$ can harm the pool, so it must be chosen carefully. When the risk of a depeg is higher, a smaller $A$ should be used to narrow the flat region and make the curve react faster. While the curve remains flat, the internal quote can lag further behind external markets before the slope reacts. First, if one asset depegs, traders will dump it into the pool at near-peg prices for some time while the curve still treats it as equal, leaving LPs holding the toxic asset. Second, new LPs or arbitrageurs can buy the underpriced asset elsewhere and deposit it at the pool's near-peg price, diluting existing LPs. Once the market pushes past the edge of the flat region, the curve becomes steep and losses grow faster, so shocks are felt more abruptly.

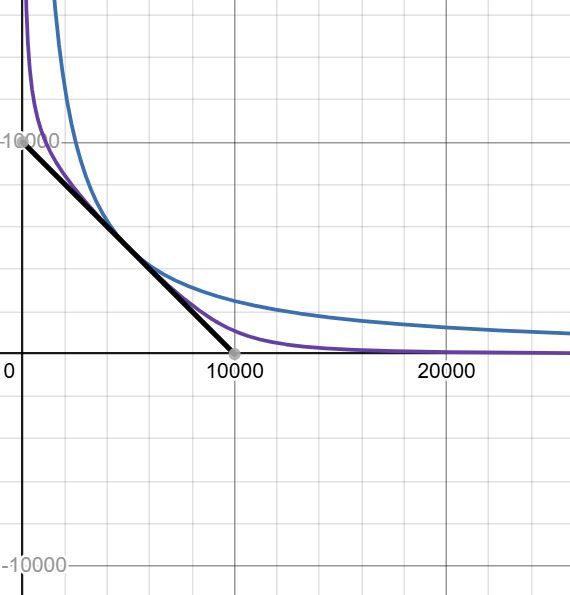

An interactive Desmos graph lets you vary $A$ and see how the flat area expands and where the "wall" begins. Legend:

- Black: constant sum

- Blue: constant product

- Purple: StableSwap

Repeg mechanism

StableSwap was originally designed to support only 1:1 pegged assets. However, TwoCrypto combines the mathematical simplicity of StableSwap with support for non-pegged assets by utilizing the repeg mechanism initially described in CryptoSwap.

What is a repeg?

A repeg is the process of adjusting the pool's internal price anchor to better align with current market conditions. TwoCrypto has a flat region that provides more favorable trading conditions for users. However, due to the volatile nature of crypto assets, prices are constantly moving, and this flat region must shift accordingly to preserve these properties. A repeg event acts as a passive rebalancing mechanism, adjusting the pool's internal price anchor toward the current market price to restore capital efficiency and keep the pool competitive.

But why repeg at all?

Even with well-chosen parameters for the curve, we still face issues if the market price drifts from the peg price:

- Lower liquidity: Liquidity becomes less effective as the pool operates outside its optimal range.

- Higher slippage: Trades experience worse execution as the curve steepens away from the peg.

- Reduced volume: Higher slippage discourages traders, leading to fewer transactions.

- Lower fee income: Reduced volume means fewer fees collected, creating a vicious cycle where the pool cannot afford future repegs.

Why not repeg every block?

Every repeg creates rebalance loss for the pool. Think of it as shifting liquidity between ticks in Uniswap v3. Change the peg too often, and the realized loss accumulates. Change it too rarely, and slippage increases because liquidity outside the flat region is thin (often thinner than in Uniswap v2), making the pool less efficient even if fees per trade are higher. In short, the protocol finances repeg-induced rebalance losses with fees collected from user interactions (LP profit).

The repeg mechanism uses accumulated fees to cover the realized rebalance loss that occurs when the peg is moved. After a repeg, the pool recomputes balances at the new peg and updates the virtual price.

Definitions

In this part, we describe the repeg mechanism without donations in detail. But first, let's start with some definitions.

We need a way to measure the pool's value that accounts for StableSwap's unique form of impermanent loss.

We define $X_{cp}$ as the value of the constant-product invariant at the equilibrium point, $X_{cp} = \left(\prod_i \frac{D}{n\,p_i}\right)^{1/n}$. $X_{cp}$ is used to quantify the total pool liquidity.

- $p$: an array of token prices in terms of token 0. By definition, $p_0 = 1$. Since TwoCrypto holds two tokens, in this text we write $p$ for $p_1$.

- $xp$: array of balances of each token, valued in token 0 ($xp_i = x_i \cdot p_i$).

- $v'$, $v$: the new and old virtual price. The virtual price is $X_{cp}/S$, where $S$ is the total supply of LP tokens.

- $xcp\_profit$: a variable that tracks the value of accrued fees for liquidity providers. The whitepaper and the code compute it differently. See "xcp_profit explanation" in the Appendix.

Let's continue with definitions. TwoCrypto uses several price variables, all relative to the first asset:

- price_oracle ($p_{oracle}$): exponential moving average (EMA);

- last_prices ($p_{last}$): current spot price;

- price_scale ($p_{scale}$): current liquidity concentration (peg) price.

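EMA calculation:

$$p_{oracle} = p_{last}\,(1 - \alpha) + p_{oracle}^{prev}\,\alpha$$

where $\alpha$ decays with $\Delta t$, the time elapsed since the last EMA update.

In the paper, $\alpha = 2^{-\Delta t / T_{1/2}}$ with half-time $T_{1/2}$. In the code, $\alpha = e^{-\Delta t / T_{ma}}$ with ma_time $T_{ma}$, so $T_{ma} = T_{1/2} / \ln 2$.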
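Below is a small Python sketch of one EMA step. It assumes floating-point prices instead of the contract's 10**18 fixed point. It mirrors the contract's cap of the spot input at twice price_scale. The function name and the 600-second half-time are illustrative assumptions.

```python
import math


def update_price_oracle(price_oracle: float, last_price: float, price_scale: float,
                        dt: float, ma_time: float) -> float:
    """One EMA step: alpha = exp(-dt / ma_time); the spot input is capped at 2 * price_scale."""
    alpha = math.exp(-dt / ma_time)
    capped_last = min(last_price, 2 * price_scale)  # same cap as in tweak_price()
    return capped_last * (1 - alpha) + price_oracle * alpha


half_time = 600.0                  # hypothetical 10-minute half-time
ma_time = half_time / math.log(2)  # ~866 s, the equivalent ma_time
```

After exactly one half-time, $\alpha = 0.5$, so the oracle moves halfway toward the (capped) spot price.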

Repeg mechanism — short walkthrough

A repeg is a two-pass routine that runs after any swap or imbalanced liquidity change. First, the pool measures the market move:

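- updates the last trade price $p_{last}$

- refreshes the EMA $p_{oracle}$

- recomputes the invariant $D$ at the current peg $p_{scale}$

From the updated $D$ and $p_{scale}$, it derives $X_{cp}$ and the virtual price $v' = X_{cp}/S$. Here $S$ is the LP token supply and $v$ is the previous virtual price. It then books fees additively into the accounting variable:

$$xcp\_profit \leftarrow xcp\_profit + (v' - v)$$

With fees recognized, the contract tests whether there is enough "budget" to move the peg. In the normalized units used on-chain, the gate is equivalent to

$$v' - 1 > \frac{xcp\_profit - 1}{2} + \text{allowed\_extra\_profit}$$

If this inequality fails, the call ends with no peg change: only half of the accumulated profit may be spent on repegs.

If the gate passes, the pool proposes a small step of the peg toward the EMA. This is a bounded move controlled by the adjustment step and the distance between $p_{oracle}$ and $p_{scale}$. The pool rescales the value balances $xp$ at the candidate peg $p'_{scale}$ and re-solves $D'$. It then computes the candidate LP value $X'_{cp}$ and virtual price $v_{new} = X'_{cp}/S$.

The gate is checked again with $v_{new}$, this time as $v_{new} \ge \max\left(1, \frac{xcp\_profit + 1}{2}\right)$, without the allowed_extra_profit margin. Only if it holds does the contract commit the repeg by setting $p_{scale} \leftarrow p'_{scale}$, $D \leftarrow D'$, and $v \leftarrow v_{new}$. Importantly, $xcp\_profit$ is not updated on this second pass, so fee accrual remains tied to trade fees rather than to peg motion.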
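The two checks can be sketched in Python as below, using 10**18 fixed point like the contract. This is a simplified illustration under stated assumptions: the function names are hypothetical, and the once-per-block condition and the peg step itself are omitted.

```python
E18 = 10**18  # fixed-point unit used on-chain


def first_pass(old_vp: int, vp: int, xcp_profit: int, allowed_extra_profit: int) -> tuple[int, bool]:
    """Book fees additively, then test the 'half of the profit' repeg gate."""
    xcp_profit = xcp_profit + vp - old_vp
    threshold_vp = max(E18, (xcp_profit + E18) // 2)
    return xcp_profit, vp > threshold_vp + allowed_extra_profit


def second_pass_ok(new_vp: int, xcp_profit: int) -> bool:
    """Commit check for the candidate peg; xcp_profit is NOT updated here."""
    threshold_vp = max(E18, (xcp_profit + E18) // 2)
    return new_vp > E18 and new_vp >= threshold_vp
```

For example, with $xcp\_profit = 1.10$ the threshold is $1.05$. A virtual price of $1.06$ passes the first gate only if allowed_extra_profit is below $0.01$.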

Dynamic fees

What about fees?

Liquidity providers earn fees on swaps, deposits, and imbalanced withdrawals, typically a small percentage of volume (e.g., 0.1% or 0.05%). That revenue must offset the rebalance loss that grows as prices move. In regular AMMs without a peg or flat region, IL accumulates across the full price range. TwoCrypto is different: it maintains a flat, high-liquidity region around a peg where IL is minimized. LPs experience losses primarily due to pool imbalance, when the pool composition shifts away from equilibrium as traders arbitrage price movements. Higher fees during such imbalances help compensate LPs by generating additional revenue that offsets these losses.

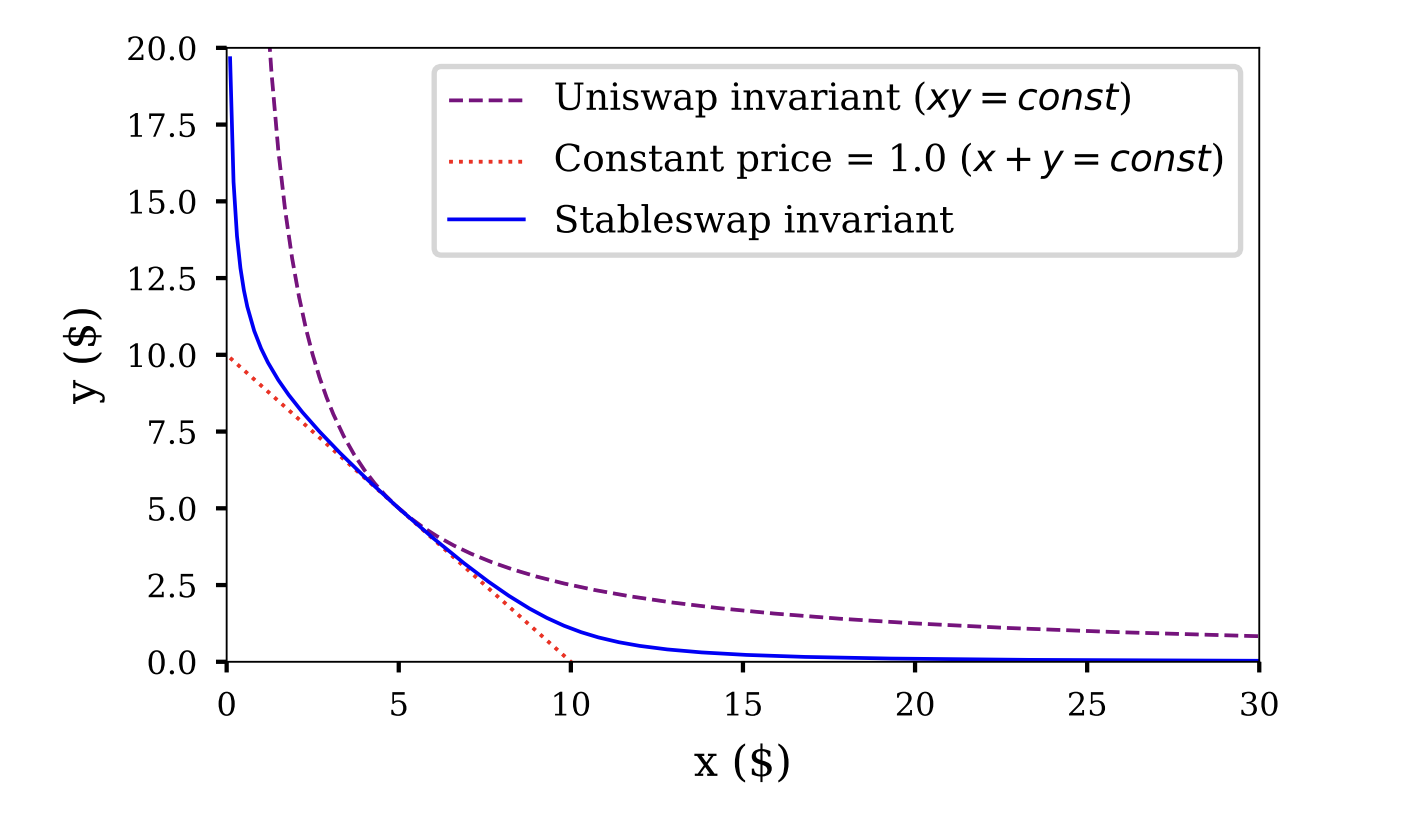

The more imbalanced the pool, the higher the fee paid on exchanges and unbalanced deposits or withdrawals.


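The fee is a blend of two levels, weighted by how balanced the pool is:

$$f = g\,f_{mid} + (1 - g)\,f_{out}, \qquad g = \frac{\gamma_{fee}}{\gamma_{fee} + 1 - K}, \qquad K = \frac{\prod_i xp_i}{\left(\sum_i xp_i / n\right)^n}$$

The graph shows the relationship between the fee (y-axis) and the term $K$ (x-axis). $K$ equals 1 for a balanced pool and decreases as the pool moves further from equilibrium. $f_{mid}$ (mid_fee) and $f_{out}$ (out_fee) determine the minimum and maximum fees, respectively, while $\gamma_{fee}$ (fee_gamma) controls how steeply the fee increases.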
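The sketch below implements the CryptoSwap fee formula $f = g f_{mid} + (1-g) f_{out}$ for two coins in 10**18 fixed point. Here $g = \frac{\gamma_{fee}}{\gamma_{fee} + 1 - K}$ and $K = \prod xp_i / (\sum xp_i/n)^n$. The name `dynamic_fee` and the example parameter values are assumptions. Fees use a 10**10 denominator, so 3_000_000 means 0.03%.

```python
E18 = 10**18
N_COINS = 2


def dynamic_fee(xp: list[int], mid_fee: int, out_fee: int, fee_gamma: int) -> int:
    """f = g*mid_fee + (1 - g)*out_fee with g = fee_gamma / (fee_gamma + 1 - K)."""
    s = xp[0] + xp[1]
    # K = prod(xp) / (sum(xp)/N)^N, equal to 1 (E18) for a balanced pool
    k = E18 * N_COINS**N_COINS * xp[0] // s * xp[1] // s
    g = fee_gamma * E18 // (fee_gamma + E18 - k)
    return (mid_fee * g + out_fee * (E18 - g)) // E18
```

A small fee_gamma makes $g$ collapse quickly as $K$ drops below 1, so even moderate imbalance pushes the fee close to out_fee.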

Refuel of TwoCrypto

Repeg Stuck

What does a repeg cost?

As mentioned earlier, most trades in a crypto pool should occur in the constant-sum region by design. This is where the main benefits emerge: deeper liquidity, lower slippage, and lower IL for liquidity providers.

But like in every mechanism, we may find ourselves in a situation where the pool doesn’t have enough fuel, and there are no gas stations around. The spot price drifts far from the current peg, trading activity declines, fee income falls, and the pool cannot afford to repeg. That's when you need to call a friend for a fuel can.

Donations

A donation is liquidity added to the pool through add_liquidity() whose LP shares can be used only for repegs and are burned when spent. No one receives those LP tokens. The pool enforces a cap through donation_shares_max_ratio so that reliance on donations remains bounded. Note that these LP tokens are fully backed by the donated liquidity; they are not minted from nothing.

Two time-based controls determine how much of the donated buffer is actually usable at any moment.

First, donations unlock linearly over donation_duration so the available amount grows smoothly rather than appearing all at once (see “donation release derivation” section).

Second, a protection factor temporarily reduces what is available and then decays to zero by donation_protection_expiry_ts. Protection is extended by genuine (non-donation) liquidity additions to discourage opportunistic draining (see “donation lock derivation” section).

Linear release and time-decaying protection together serve as MEV guards. The first prevents one-block extraction of the entire donation, and the second deters simple sandwiches that try to burn all available donations immediately. When the initial repeg condition passes but the profit from fees does not fully cover the repeg cost, the contract calculates how many shares it must burn to meet the constraint. It then burns the needed donation shares (if available) and updates the linear-release anchor so the release invariant continues to hold.

Let's take a closer look at the implementation of these functions:

_donation_shares()

This function calculates the donation shares available for a repeg: those that are time-unlocked and not under protection.

_donation_protection: a boolean parameter. If true, protection is enabled and protection_factor is applied.

```python
def _donation_shares(_donation_protection: bool = True) -> uint256:
```

self.donation_shares: the amount of donated shares that have not yet been burned. In formulas, we'll call this $S_d$.

First, compute the time-based release:

$$S_{unlocked} = \min\left(S_d,\ S_d \cdot \frac{t - t_{rel}}{\text{donation\_duration}}\right)$$

where $t$ is block.timestamp and $t_{rel}$ is the release anchor described below.

self.last_donation_release_ts ($t_{rel}$): the stored timestamp from which the linear release is measured.

donation_duration: the time required for donations to fully unlock. Set by the admin; the initializer default is 7 days.

```python
donation_shares: uint256 = self.donation_shares
if donation_shares == 0:
    return 0

# --- Time-based release of donation shares ---
elapsed: uint256 = block.timestamp - self.last_donation_release_ts
unlocked_shares: uint256 = min(donation_shares, donation_shares * elapsed // self.donation_duration)
```

If _donation_protection is False, we return the unlocked shares without applying protection_factor. We will see where this is used later.

```python
if not _donation_protection:
    # if donation protection is disabled, return the total amount of unlocked donation shares
    # this is needed to calculate new timestamp for overlapping donations in add_liquidity
    # otherwise must always be called with donation_protection=True
    return unlocked_shares
```

Next, compute the protection factor.

It's based on two variables:

- self.donation_protection_expiry_ts: initially 0. Updated on liquidity additions while donation shares exist. We will name it $t_{exp}$.

- self.donation_protection_period: initially 10 minutes. Set by the admin in the set_donation_protection_params() function. We will name it $T_p$.

We check whether protection is still active ($t_{exp} > t$, with $t$ = block.timestamp). If so, the protection factor

$$f_p = \min\left(\frac{t_{exp} - t}{T_p},\ 1\right)$$

withholds part of the time-unlocked shares $S_{unlocked}$ (unlocked_shares). It decreases linearly over time if there are no liquidity additions (we'll see this later). The function returns $S_{unlocked}(1 - f_p)$, so $S_{unlocked} \cdot f_p$ represents exactly the "protected shares."

```python
# --- Donation protection damping factor ---
protection_factor: uint256 = 0
expiry: uint256 = self.donation_protection_expiry_ts
if expiry > block.timestamp:
    protection_factor = min((expiry - block.timestamp) * PRECISION // self.donation_protection_period, PRECISION)

return unlocked_shares * (PRECISION - protection_factor) // PRECISION
```
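The whole function can be modeled off-chain with a Python sketch like the one below. Storage reads are replaced by hypothetical parameters, and `now` stands in for block.timestamp.

```python
PRECISION = 10**18


def available_donation_shares(donation_shares: int, last_release_ts: int, donation_duration: int,
                              protection_expiry_ts: int, protection_period: int, now: int,
                              donation_protection: bool = True) -> int:
    if donation_shares == 0:
        return 0
    # Linear time-based release since the release anchor
    elapsed = now - last_release_ts
    unlocked = min(donation_shares, donation_shares * elapsed // donation_duration)
    if not donation_protection:
        return unlocked
    # Protection factor decays linearly to 0 at protection_expiry_ts
    protection_factor = 0
    if protection_expiry_ts > now:
        protection_factor = min((protection_expiry_ts - now) * PRECISION // protection_period, PRECISION)
    return unlocked * (PRECISION - protection_factor) // PRECISION


DAY = 86_400
```

For example, halfway through a 7-day release, 1000 donated shares have 500 unlocked. With 5 minutes left of a 10-minute protection, only 250 of them are available.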

add_liquidity()

add_liquidity() is called to provide liquidity to the pool. With donations, one more parameter was added to the function: donation: bool = False.

```python
def add_liquidity(
    amounts: uint256[N_COINS],
    min_mint_amount: uint256,
    receiver: address = msg.sender,
    donation: bool = False
) -> uint256:
```

We will explore only the new parts related to donations.

d_token is the amount of LP shares that this call adds to the pool. We'll call it $\Delta S$.

The pool receives donations only through this function. Let's see how a donation is processed.

Beyond the usual sanity checks, we must ensure that new donations don't push the pool above self.donation_shares_max_ratio, the cap on the donated share of the pool. It is initially set to 10% and can be changed by the admin in set_donation_protection_params().

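So the check accounts for both the previous donation shares $S_d$ and the new shares $\Delta S$ (d_token), with $S$ the LP supply before the call:

$$\frac{S_d + \Delta S}{S + \Delta S} \le \text{donation\_shares\_max\_ratio}$$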

```python
# At this point d_token has already been calculated from the received amounts and other state variables
...
d_token_fee = (
    self._calc_token_fee(amounts_received, xp, donation, True) * d_token // 10**10 + 1
)  # for donations - we only take NOISE_FEE (check _calc_token_fee)
d_token -= d_token_fee

if donation:
    assert receiver == empty(address), "nonzero receiver"
    new_donation_shares: uint256 = self.donation_shares + d_token
    assert new_donation_shares * PRECISION // (token_supply + d_token) <= self.donation_shares_max_ratio, "donation above cap!"
```

Next, we recalculate the elapsed time. Why? We want to treat the newly donated shares and the previous donations as one combined donation. The key invariant is that the currently unlocked amount stays constant, which keeps the release smooth and linear.

This combined donation has a start time between the previous timestamp and the current block.timestamp, so we update self.last_donation_release_ts. The formula derivation is shown in the code comments.

This operation prevents a "spike" in the linear-release graph while increasing the release rate.

Note: If there were no prior donations, then last_donation_release_ts = block.timestamp, which initializes the start of the release process.

```python
    # When adding donation, if the previous one hasn't been fully released we preserve
    # the currently unlocked donation [given by `self._donation_shares()`] by updating
    # `self.last_donation_release_ts` as if a single virtual donation of size `new_donation_shares`
    # was made in past and linearly unlocked reaching `self._donation_shares()` at the current time.
    # We want the following equality to hold:
    # self._donation_shares() = new_donation_shares * (new_elapsed / self.donation_duration)
    # We can rearrange this to find the new elapsed time (imitating one large virtual donation):
    # => new_elapsed = self._donation_shares() * self.donation_duration / new_donation_shares
    # edge case: if self.donation_shares = 0, then self._donation_shares() is 0
    # and new_elapsed = 0, thus initializing last_donation_release_ts = block.timestamp
    new_elapsed: uint256 = self._donation_shares(False) * self.donation_duration // new_donation_shares

    # Additional observations:
    # new_elapsed = (old_pool * old_elapsed / D) * D / new_pool = old_elapsed * (old_pool / new_pool)
    # => new_elapsed is always smaller than old_elapsed
    # and self.last_donation_release_ts is carried forward proportionally to new donation size.
    self.last_donation_release_ts = block.timestamp - new_elapsed

    # Credit donation: we don't explicitly mint lp tokens, but increase total supply
    self.donation_shares = new_donation_shares
    self.totalSupply += d_token

    log Donation(donor=msg.sender, token_amounts=amounts_received)
```

Note that these LP tokens are not minted to anyone; they are simply counted in the totalSupply of LP tokens.
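The timestamp adjustment can be checked numerically with a Python sketch like this one. The helper names are hypothetical. Protection is ignored because add_liquidity() calls _donation_shares(False) at this point.

```python
WEEK = 7 * 86_400


def time_unlocked(shares: int, last_release_ts: int, duration: int, now: int) -> int:
    """Time-based release only (protection ignored), like _donation_shares(False)."""
    if shares == 0:
        return 0
    return min(shares, shares * (now - last_release_ts) // duration)


def merge_donation(shares: int, last_release_ts: int, duration: int,
                   new_shares: int, now: int) -> tuple[int, int]:
    """Treat old and new donations as one virtual donation that has already
    unlocked exactly what was unlocked before; return (total, new anchor)."""
    unlocked = time_unlocked(shares, last_release_ts, duration, now)
    total = shares + new_shares
    new_elapsed = unlocked * duration // total
    return total, now - new_elapsed
```

Doubling the donation halfway through the week moves the anchor forward so that exactly the same 500 shares remain unlocked. The remaining shares then release at twice the previous rate.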

Now, what if someone adds liquidity while donation shares exist in the pool? They might want to claim part of the "free cake." That's where the protection_factor comes in.

self.donation_protection_lp_threshold: the relative LP addition at which a deposit extends protection by a full donation_protection_period. Smaller deposits extend it proportionally. It is initially set to 20% and can be changed by the admin through set_donation_protection_params(). We'll call it $\theta$.

Here, d_token is the amount of LP tokens minted for the caller.

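So, for every new (non-donation) addition made while donation shares exist, the expiry is updated using the following quantities:

- $t$: block.timestamp
- $S$: the LP supply before the call
- $\Delta S$: the minted amount (d_token)
- $t_{exp}$: the current expiry (donation_protection_expiry_ts)
- $T_p$: the protection period
- $\theta$: the LP threshold

$$r = \frac{\Delta S}{S + \Delta S}, \qquad \Delta t_{ext} = \min\left(\frac{r}{\theta}\,T_p,\ T_p\right), \qquad t'_{exp} = \min\left(\max(t_{exp}, t) + \Delta t_{ext},\ t + T_p\right)$$

The $t + T_p$ cap ensures that spamming deposits cannot prolong the protection indefinitely.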

```python
else:
    # --- Donation Protection & LP Spam Penalty ---
    # Extend protection to shield against donation extraction via sandwich attacks.
    # A penalty is applied for extending the protection to disincentivize spamming.
    relative_lp_add: uint256 = d_token * PRECISION // (token_supply + d_token)
    if relative_lp_add > 0 and self.donation_shares > 0:  # sub-precision additions are expensive to stack
        # Extend protection period
        protection_period: uint256 = self.donation_protection_period
        extension_seconds: uint256 = min(relative_lp_add * protection_period // self.donation_protection_lp_threshold, protection_period)
        current_expiry: uint256 = max(self.donation_protection_expiry_ts, block.timestamp)
        new_expiry: uint256 = min(current_expiry + extension_seconds, block.timestamp + protection_period)
        self.donation_protection_expiry_ts = new_expiry

    # Regular liquidity addition
    self.mint(receiver, d_token)

price_scale = self.tweak_price(A_gamma, xp, D)
```
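The expiry update for a regular deposit is sketched below in Python with hypothetical parameter names (`now` stands for block.timestamp). It shows the proportional extension and the one-period cap.

```python
PRECISION = 10**18


def extend_protection(d_token: int, token_supply: int, donation_shares: int, expiry_ts: int,
                      protection_period: int, lp_threshold: int, now: int) -> int:
    """Return the new donation_protection_expiry_ts after a regular deposit."""
    relative_lp_add = d_token * PRECISION // (token_supply + d_token)
    if relative_lp_add > 0 and donation_shares > 0:
        # Extension proportional to deposit size, a full period at lp_threshold
        extension = min(relative_lp_add * protection_period // lp_threshold, protection_period)
        current = max(expiry_ts, now)
        # Never more than one full period ahead of now
        return min(current + extension, now + protection_period)
    return expiry_ts
```

With a 20% threshold and a 10-minute period, a 10% deposit adds 5 minutes. Repeating it in the same block reaches the 10-minute cap after the second deposit.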

_calc_token_fee()

_calc_token_fee() is called by the deposit and withdrawal functions (except remove_liquidity()) to calculate the fee. With donations, two new parameters were added: donation: bool = False and deposit: bool = False.

```python
def _calc_token_fee(amounts: uint256[N_COINS],
                    xp: uint256[N_COINS],
                    donation: bool = False,
                    deposit: bool = False,
                    from_view: bool = False) -> uint256:
```

If this transaction is a donation, no fee is taken except a small NOISE_FEE of 0.1 BPS (10**5 in code, where BASE is 10**10).

```python
if donation:
    # Donation fees are 0, but NOISE_FEE is required for numerical stability
    return NOISE_FEE
```

If someone adds liquidity while there is at least one donation share in the pool, the pool extends protection. In addition, if protection is already active, the protocol charges an "ape tax" on each liquidity addition. We'll call it $f_{spam}$.

This fee applies only to deposits made while protection has not expired, and it is proportional to the protection factor. Note that it is computed before self.donation_protection_expiry_ts is updated.

The protection factor $f_p$ uses the same formula as in the _donation_shares() section. The base fee $f$ here is derived from the formula in the “Dynamic fees” section:

$$f_{spam} = f_p \cdot f$$

```python
# fee, S, Sdiff and lp_spam_penalty_fee are set earlier in the function (omitted here)
...
if deposit:
    # Penalty fee for spamming add_liquidity into the pool
    current_expiry: uint256 = self.donation_protection_expiry_ts
    if current_expiry > block.timestamp:
        # The penalty is proportional to the remaining protection time and the current pool fee.
        protection_factor: uint256 = min((current_expiry - block.timestamp) * PRECISION // self.donation_protection_period, PRECISION)
        lp_spam_penalty_fee = protection_factor * fee // PRECISION

return fee * Sdiff // S + NOISE_FEE + lp_spam_penalty_fee
```

This fee prevents attempts to extract value from donation releases.
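Below is a simplified Python model of the fee logic. Assumptions: `fee` is passed in as the already-derived pool fee term (the contract derives it from the dynamic fee), amounts are already in common units, and the name `calc_token_fee` is hypothetical.

```python
PRECISION = 10**18
NOISE_FEE = 10**5  # 0.1 bps with a 10**10 fee denominator


def calc_token_fee(amounts: list[int], fee: int, protection_expiry_ts: int,
                   protection_period: int, now: int,
                   donation: bool = False, deposit: bool = False) -> int:
    if donation:
        return NOISE_FEE
    lp_spam_penalty_fee = 0
    if deposit and protection_expiry_ts > now:
        # Penalty scales with the remaining protection time
        protection_factor = min((protection_expiry_ts - now) * PRECISION // protection_period, PRECISION)
        lp_spam_penalty_fee = protection_factor * fee // PRECISION
    # Imbalance term: only the unbalanced part of the deposit/withdrawal pays `fee`
    s = sum(amounts)
    avg = s // len(amounts)
    sdiff = sum(abs(a - avg) for a in amounts)
    return fee * sdiff // s + NOISE_FEE + lp_spam_penalty_fee
```

Note that a perfectly balanced deposit pays only NOISE_FEE when no protection is active. During protection, the penalty applies regardless of balance, which is what makes rapid deposit cycling costly.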

_withdraw_leftover_donations()

_withdraw_leftover_donations() is called after liquidity is removed. If no LP tokens remain except donation shares, all balances are sent to the factory's fee_receiver, or to the factory admin if no fee receiver is set.

```python
if self.donation_shares != self.totalSupply:
    return

# Pool has no other LP than donation shares, must be emptied
receiver: address = staticcall factory.fee_receiver()
if receiver == empty(address):
    receiver = staticcall factory.admin()

# empty the pool
withdraw_amounts: uint256[N_COINS] = self.balances
for i: uint256 in range(N_COINS):
    # updates self.balances here
    self._transfer_out(i, withdraw_amounts[i], receiver)
```

The function also clears the state and logs that no liquidity remains.

```python
# Update state
self.donation_shares = 0
self.totalSupply = 0
self.D = 0
self.donation_protection_expiry_ts = 0

log RemoveLiquidity(provider=receiver, token_amounts=withdraw_amounts, token_supply=0)
```

For an alternative explanation of how donations work, see the "Donation release derivation" and "Donation lock derivation" sections in the Appendix.

Repegging with donations

tweak_price()

Now that we've covered the necessary definitions and described the donation mechanism, let's explore the tweak_price() function in detail.

This function updates all the price variables mentioned above. It's called after every swap, liquidity addition, and imbalanced liquidity removal. Since these operations change the pool's balances, the function receives freshly calculated $xp$ and $D$ values, computed with Newton's method right before the call. Even during a swap, $D$ changes because the fee stays in the pool.

At the start, the function updates price_oracle and last_prices. The EMA advances at most once per block, while the spot price is refreshed on every call.

```python
if last_timestamp < block.timestamp:
    ...
    price_oracle = unsafe_div(
        min(last_prices, 2 * price_scale) * (10**18 - alpha) +
        price_oracle * alpha,  # ^-------- Cap spot price into EMA.
        10**18
    )
    self.cached_price_oracle = price_oracle
    self.last_timestamp = block.timestamp

...

# Here we update the spot price, please notice that this value is unsafe
# and can be manipulated.
self.last_prices = unsafe_div(
    staticcall self.MATH.get_p(_xp, D, A_gamma) * price_scale,
    10**18
)
```

The EMA updates only on the first swap or other operation in a block. It uses last_prices as stored at that moment, i.e. the spot price left by the last operation of an earlier block, because last_prices is refreshed only after the EMA update. Additionally, the EMA input caps the spot price at twice the peg price (2 * price_scale).

```python
donation_shares: uint256 = self._donation_shares()
locked_supply: uint256 = total_supply - donation_shares
```

locked_supply counts the regular LP shares plus the donation shares that are not yet available (still time-locked or under protection).

Note that donation_shares here is the available amount: the time-unlocked donation shares reduced by the protection factor.

The next step is to recompute xcp from the current D and price_scale, set virtual_price = xcp / total_supply, and update xcp_profit.

Note the check below: the virtual price may decrease only while A and gamma are being ramped, so xcp_profit can decrease in that case.

```python
if virtual_price < old_virtual_price:
    # If A and gamma are being ramped, we allow the virtual price to decrease,
    # as changing the shape of the bonding curve causes losses in the pool.
    assert is_ramping, "virtual price decreased"
```

Next, we check whether there is enough profit to trigger a repeg. For the virtual price, we use vp_boosted: the virtual price calculated with the available donation shares excluded from the supply. We define the threshold as threshold_vp = max(1, (xcp_profit + 1) / 2).

In short, the condition is $v_{boosted} > \max\left(1, \frac{xcp\_profit + 1}{2}\right) + \varepsilon$. Here $\varepsilon$ is allowed_extra_profit (rebalancing_params[0] in the code below), which prevents excessive repegs. Additionally, a repeg can happen at most once per block.

```python
xcp_profit: uint256 = self.xcp_profit + virtual_price - old_virtual_price
self.xcp_profit = xcp_profit

# ------------ Rebalance liquidity if there's enough profits to adjust it:
#
# Mathematical basis for rebalancing condition:
# 1. xcp_profit grows after virtual price, total growth since launch = (xcp_profit − 1)
# 2. We reserve half of the growth for LPs and admin, rest is used to rebalance the pool
# Rebalancing condition transformation:
# virtual_price - 1 > (xcp_profit - 1)/2 + allowed_extra_profit
# virtual_price > 1 + (xcp_profit - 1)/2 + allowed_extra_profit
threshold_vp: uint256 = max(10**18, (xcp_profit + 10**18) // 2)

# The allowed_extra_profit parameter prevents reverting gas-wasting rebalances
# by ensuring sufficient profit margin

# user_supply < total_supply => vp_boosted > virtual_price
# by not accounting for donation shares, virtual_price is boosted leading to rebalance trigger
# this is approximate condition that preliminary indicates readiness for rebalancing
vp_boosted: uint256 = 10**18 * xcp // locked_supply
assert vp_boosted >= virtual_price, "negative donation"

if (vp_boosted > threshold_vp + rebalancing_params[0]) and (last_timestamp < block.timestamp):
```
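The trigger condition can be sketched in Python as follows, using hypothetical names and 10**18 fixed point; the once-per-block check is omitted. It shows how excluding available donation shares from the supply can push the virtual price over the threshold.

```python
E18 = 10**18


def repeg_triggered(xcp: int, total_supply: int, available_donations: int,
                    xcp_profit: int, allowed_extra_profit: int) -> bool:
    virtual_price = E18 * xcp // total_supply
    locked_supply = total_supply - available_donations
    threshold_vp = max(E18, (xcp_profit + E18) // 2)
    # Excluding available donation shares from the supply boosts the virtual price
    vp_boosted = E18 * xcp // locked_supply
    assert vp_boosted >= virtual_price, "negative donation"
    return vp_boosted > threshold_vp + allowed_extra_profit
```

With a virtual price of 1.02 and a threshold of 1.05, fees alone cannot trigger a repeg. Excluding 5% of the supply as available donations lifts vp_boosted to about 1.074.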

After that, we determine whether a repeg is actually needed or the current peg is acceptable.

```python
norm: uint256 = unsafe_div(
    unsafe_mul(price_oracle, 10**18), price_scale
)
if norm > 10**18:
    norm = unsafe_sub(norm, 10**18)
else:
    norm = unsafe_sub(10**18, norm)
adjustment_step: uint256 = max(
    rebalancing_params[1], unsafe_div(norm, 5)
)  # ^------------------------------------- adjustment_step.

# We only adjust prices if the vector distance between price_oracle
# and price_scale is large enough. This check ensures that no rebalancing
# occurs if the distance is low i.e. the pool prices are pegged to the
# oracle prices.
if norm > adjustment_step:
    # Calculate new price scale.
    p_new: uint256 = unsafe_div(
        price_scale * unsafe_sub(norm, adjustment_step) +
        adjustment_step * price_oracle,
        norm
    )  # <---- norm is non-zero and gt adjustment_step; unsafe = safe.
```

Translating from the code: norm is the relative distance between the oracle price and the peg, $|price\_oracle / price\_scale - 1|$. The peg moves only if norm exceeds adjustment_step.

adjustment_step is the larger of rebalancing_params[1] (the configured minimum step) and norm / 5, because we want to take bigger steps when the difference between prices is significant. For example, if the prices differ by 20%, we step by at least 4%, ensuring the adjustment is meaningful.

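The new peg price is a weighted average of the old peg price and the oracle price:

$$\text{p\_new} = \frac{\text{price\_scale}\cdot(\text{norm} - \text{adjustment\_step}) + \text{price\_oracle}\cdot\text{adjustment\_step}}{\text{norm}}$$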
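Below is a Python sketch of the peg step in 10**18 fixed point. `min_step` plays the role of rebalancing_params[1], and the function name is hypothetical.

```python
from typing import Optional

E18 = 10**18


def propose_price_scale(price_scale: int, price_oracle: int, min_step: int) -> Optional[int]:
    """Return the candidate peg, or None if the oracle is already close enough."""
    norm = price_oracle * E18 // price_scale
    norm = norm - E18 if norm > E18 else E18 - norm  # |p_oracle / p_scale - 1|
    adjustment_step = max(min_step, norm // 5)
    if norm <= adjustment_step:
        return None
    # Weighted average: move the fraction adjustment_step / norm of the way to the oracle
    return (price_scale * (norm - adjustment_step) + adjustment_step * price_oracle) // norm
```

With the oracle 20% above the peg, the step is 4% of the distance ratio, so the peg moves from 1.00 to 1.04, one fifth of the way. A 0.05% deviation below a 0.1% minimum step leaves the peg untouched.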

If all requirements are met, the contract recalculates xp, new_D, new_xcp, and new_virtual_price. xp must be recalculated because it depends on the peg price (through the second token's value), and all other variables depend on xp.

Note that new_virtual_price is calculated based on the whole totalSupply (without removing donation shares).

Next, new_virtual_price is compared with goal_vp = max(threshold_vp, virtual_price). If it falls short, the contract calculates how many donation shares to burn. Since the virtual price is xcp divided by the total supply, burning shares raises it.

The contract computes the total supply that would yield goal_vp and burns the difference between the current supply and that target, capped at the available donation shares.

```python
donation_shares_to_burn: uint256 = 0
# burn donations to get to old vp, but not below threshold_vp
goal_vp: uint256 = max(threshold_vp, virtual_price)
if new_virtual_price < goal_vp:
    # new_virtual_price is lower than virtual_price.
    # We attempt to boost virtual_price by burning some donation shares
    # This will result in more frequent rebalances.
    #
    # vp(0) = xcp / total_supply # no burn -> lowest vp
    # vp(B) = xcp / (total_supply – B) # burn B -> higher vp
    #
    # Goal: find the *smallest* B such that
    # vp(B) -> virtual_price (pre-rebalance value)
    # B <= donation_shares

    # what would be total supply with (old) virtual_price and new_xcp
    tweaked_supply: uint256 = 10**18 * new_xcp // goal_vp
    assert tweaked_supply < total_supply, "tweaked supply must shrink"
    donation_shares_to_burn = min(
        unsafe_sub(total_supply, tweaked_supply),  # burn the difference between supplies
        donation_shares  # but not more than we can burn (lp shares donation)
    )
    # update virtual price with the tweaked total supply
    new_virtual_price = 10**18 * new_xcp // (total_supply - donation_shares_to_burn)
    # we thus burn some donation shares to compensate for virtual price drop
```

Note that new_virtual_price is recalculated, because the previous value was computed with the pre-burn total supply.
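The burn calculation can be modeled in Python as below (hypothetical function name, 10**18 fixed point).

```python
E18 = 10**18


def shares_to_burn(new_xcp: int, total_supply: int, donation_shares: int,
                   new_virtual_price: int, virtual_price: int, threshold_vp: int) -> tuple[int, int]:
    """Return (burn, new_virtual_price) following the goal_vp logic."""
    goal_vp = max(threshold_vp, virtual_price)
    if new_virtual_price >= goal_vp:
        return 0, new_virtual_price
    # Supply at which new_xcp would be worth goal_vp per share
    tweaked_supply = E18 * new_xcp // goal_vp
    assert tweaked_supply < total_supply, "tweaked supply must shrink"
    burn = min(total_supply - tweaked_supply, donation_shares)
    return burn, E18 * new_xcp // (total_supply - burn)
```

When the available donations are insufficient, the burn is capped and the resulting virtual price may still fall short of goal_vp. In that case, the later threshold_vp check decides whether the repeg is committed.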

```python
if (
    new_virtual_price > 10**18 and
    new_virtual_price >= threshold_vp
    # only rebalance when pool preserves half of the profits
):
    self.D = new_D
    self.virtual_price = new_virtual_price
    self.cached_price_scale = p_new
```

Only if new_virtual_price is at least threshold_vp is the repeg successful and the new values stored. The new_virtual_price > 10**18 condition is mostly a sanity check.

After making these changes, we need to update the donation state. Since only available shares (time-released and not under protection) may be burned, we must ensure that a new call to _donation_shares() returns the old _donation_shares() minus donation_shares_to_burn.

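We'll call donation_shares_to_burn $B$.

In the code, shares_unlocked represents the time-unlocked shares $S_{unlocked}$ (ignoring protection). shares_available represents the shares available after protection, $S_{avail} = S_{unlocked}(1 - f_p)$, where $f_p$ is the protection factor. We won't replicate the full derivation, but we'll highlight the key ideas; for details, see the "Repeg" subsection of the Appendix.

Since $f_p$ scales both the old and the new unlocked amounts, simply subtracting $B$ from both won't work.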

```python
if donation_shares_to_burn > 0:
    # Invariant to hold immediately after the burn (measured after protection):
    # _donation_shares()' = _donation_shares() - donation_shares_to_burn
    # We should carry forward self.last_donation_release_ts to satisfy the invariant

    # Get pre-burn state:
    shares_unlocked: uint256 = self._donation_shares(False)  # time-unlocked, ignores protection
    shares_available: uint256 = donation_shares  # available after protection (computed above self._donation_shares(True))

    # Invariant: shares_available_new = shares_available - donation_shares_to_burn
    # Definition: shares_available = shares_unlocked * (1 - protection) [Note: (1 - protection) = shares_available / shares_unlocked]
    # To reduce shares_available_new by donation_shares_to_burn (B), we should reduce the shares_unlocked_new proportionally:
    # shares_available_new = shares_available - B = shares_unlocked * (1 - protection) - B = (shares_unlocked - B/(1 - protection)) * (1 - protection)
    # => shares_unlocked_new = shares_unlocked - B/(1 - protection) = shares_unlocked - B * shares_unlocked / shares_available
    shares_unlocked_new: uint256 = shares_unlocked - donation_shares_to_burn * shares_unlocked // shares_available
```

Once the unlocked amount (shares_unlocked_new) changes, we must recompute the elapsed time, and hence self.last_donation_release_ts.

```python
    # Definition: shares_unlocked_new = new_total * new_elapsed // donation_duration
    # => new_elapsed = shares_unlocked_new * donation_duration // new_total
    new_total: uint256 = self.donation_shares - donation_shares_to_burn
    new_elapsed: uint256 = 0
    if new_total > 0 and shares_unlocked_new > 0:
        new_elapsed = (shares_unlocked_new * self.donation_duration) // new_total

    # Apply the burn: update the state and shift the release timestamp
    self.donation_shares = new_total
    self.totalSupply -= donation_shares_to_burn
    self.last_donation_release_ts = block.timestamp - new_elapsed
```
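To see why the anchor must move, here is a Python sketch that applies the update and then re-evaluates the available shares. Helper names are hypothetical, and `now` stands for block.timestamp.

```python
PRECISION = 10**18


def donation_shares_view(shares: int, last_ts: int, duration: int, expiry_ts: int,
                         period: int, now: int, protection: bool = True) -> int:
    """Off-chain model of _donation_shares(): time release, then protection damping."""
    if shares == 0:
        return 0
    unlocked = min(shares, shares * (now - last_ts) // duration)
    if not protection:
        return unlocked
    factor = 0
    if expiry_ts > now:
        factor = min((expiry_ts - now) * PRECISION // period, PRECISION)
    return unlocked * (PRECISION - factor) // PRECISION


def burn_donations(shares: int, last_ts: int, duration: int, expiry_ts: int,
                   period: int, now: int, burn: int) -> tuple[int, int]:
    """Return (new donation_shares, new last_donation_release_ts) after burning `burn`."""
    shares_unlocked = donation_shares_view(shares, last_ts, duration, expiry_ts, period, now, False)
    shares_available = donation_shares_view(shares, last_ts, duration, expiry_ts, period, now)
    # The contract burns at most shares_available, so it is > 0 whenever burn > 0
    shares_unlocked_new = shares_unlocked - burn * shares_unlocked // shares_available
    new_total = shares - burn
    new_elapsed = 0
    if new_total > 0 and shares_unlocked_new > 0:
        new_elapsed = shares_unlocked_new * duration // new_total
    return new_total, now - new_elapsed
```

In this example, 250e18 shares are available and 50e18 are burned; after the update, about 200e18 remain available. Integer rounding leaves a tiny residual, which the test tolerates. Simply subtracting the burned shares from donation_shares without moving the anchor would leave 237.5e18 available instead.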

If any of the virtual price checks fail (except the first one), the function stores the new D and virtual_price computed at the current peg and returns. This is necessary because D was changed by the operation that called tweak_price(), and virtual_price depends on this updated value.

```python
self.D = D
self.virtual_price = virtual_price
return price_scale
```

Conclusion

Curve's donation mechanism transforms how CryptoPool manages the fundamental tension between capital efficiency and peg stability. Prior to donations, pools could only repeg using accumulated fees, creating a direct conflict between LP returns and oracle tracking quality. The larger the repeg, the more fees consumed, the less profit for LPs. Donations resolve this by introducing a third source of funds that exists specifically for rebalancing operations.

The elegance is in how donations integrate with existing pool mechanics. Rather than creating a separate donation contract or complex claim system, donated LP shares simply inflate the total supply and unlock linearly over the donation duration (default seven days). The protection layer prevents extraction attacks by linking donation availability to genuine pool activity. The system penalizes rapid liquidity cycling through the spam fee, making sandwich attacks on unlocking donations economically irrational.

Useful links

- https://dune.com/hagaetc/dex-metrics

- https://github.com/curvefi/twocrypto-ng/compare/2b78439611b2d230cbfb1666a8b225ad2a6acf82..387fbe5f12473ef0e1f0c6a76bc38f1ca0da669d

- https://github.com/curvefi/cryptopool-simulator/tree/main

- https://docs.curve.finance/cryptoswap-exchange/twocrypto-ng/implementations/refuel/

Appendix

xcp_profit explanation

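The original paper proposes a multiplicative formula, $xcp\_profit' = xcp\_profit \cdot \frac{v'}{v}$. The code uses an additive one, $xcp\_profit' = xcp\_profit + (v' - v)$. Both follow the same rule: $xcp\_profit$ grows together with the virtual price $v$. Note that both $xcp\_profit$ and $v$ start from 1.0 for every pool.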

Now, let's show that, without repegs, the multiplicative form reduces to a simpler additive one.

Additive transformation

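Since $xcp\_profit_0 = v_0 = 1$ at initialization, without repegs both approaches telescope to the same value:

$$\prod_{k=1}^{m}\frac{v_k}{v_{k-1}} = v_m = 1 + \sum_{k=1}^{m}(v_k - v_{k-1})$$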

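After a repeg, the additive approach grows more slowly. This is the key property: we don't want to overestimate the fees collected by LPs. Otherwise those fees will be spent on repegs too aggressively, and LPs will receive less than half of the profit.

Proof: let a repeg lower the virtual price to $v_r$ while $xcp\_profit$ stays unchanged, and let the virtual price then grow to $v' \ge v_r$. Both rules start from the same $xcp\_profit$. The additive $xcp\_profit$ starts equal to $v$, and only repegs separate them, so $xcp\_profit \ge v_r$. Therefore:

$$xcp\_profit\cdot\frac{v'}{v_r} - \left(xcp\_profit + v' - v_r\right) = (v' - v_r)\left(\frac{xcp\_profit}{v_r} - 1\right) \ge 0$$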
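Below is a tiny floating-point simulation comparing the two bookkeeping rules. It uses hypothetical virtual-price values: 1.10 after fees, a repeg down to 1.06, then fees up to 1.12. The function name is illustrative.

```python
def track_profit(vps: list[float], repeg_steps: set[int]) -> tuple[float, float]:
    """Return (multiplicative, additive) xcp_profit after a sequence of virtual prices.

    Steps listed in `repeg_steps` are repeg drops: the virtual price falls but
    neither tracker books the change.
    """
    mult = add = 1.0
    prev = 1.0  # virtual price starts at 1.0
    for i, v in enumerate(vps):
        if i not in repeg_steps:
            mult *= v / prev  # paper: xcp_profit * v' / v
            add += v - prev   # code: xcp_profit + (v' - v)
        prev = v
    return mult, add
```

Without repegs the two trackers agree. After the repeg, the additive one ends at 1.16 versus about 1.162 for the multiplicative one.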

Donation release derivation

Unlocked amount calculation

We want every new donation to release linearly while keeping the already unlocked shares intact. The mechanism stores everything in two variables (donation_shares and last_donation_release_ts) to save gas. So for each new donation, we recompute the release timestamp as if there had been one large donation.

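Let $S_d$ be the previous donation shares, $\Delta S$ the new ones, $T$ the donation_duration, and $\Delta t = t - t_{rel}$ the elapsed time since the release anchor. We require the unlocked amount to stay the same:

$$S_{unlocked} = \min\left(S_d,\ S_d\,\frac{\Delta t}{T}\right) = (S_d + \Delta S)\,\frac{\Delta t'}{T} \quad\Rightarrow\quad \Delta t' = \frac{S_{unlocked}\cdot T}{S_d + \Delta S}, \qquad t'_{rel} = t - \Delta t'$$

Intuitively, $\Delta t'$ must be lower than $\Delta t$: as the total donation grows, a shorter elapsed time keeps $S_{unlocked}$ the same.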

Repeg

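During a repeg, we burn some donation shares. Simply deducting the burned shares $B$ from $S_d$ won't correctly reflect the available amount $S_{avail}$, so we must adjust the unlocked amount to preserve the following invariant:

$$S'_{avail} = S_{avail} - B \tag{1}$$

- $S_{unlocked}$: time-unlocked donation shares, ignoring the protection

- $f_p$: protection factor, unchanged within the block

By definition:

$$S_{avail} = S_{unlocked}\,(1 - f_p) \tag{2}$$

$$S'_{avail} = S'_{unlocked}\,(1 - f_p) \tag{3}$$

Substituting (2) into (1):

$$S'_{avail} = S_{unlocked}\,(1 - f_p) - B \tag{4}$$

(4) into (3):

$$S'_{unlocked}\,(1 - f_p) = S_{unlocked}\,(1 - f_p) - B \tag{5}$$

Divide (5) by $(1 - f_p) = S_{avail}/S_{unlocked}$:

$$S'_{unlocked} = S_{unlocked} - B\,\frac{S_{unlocked}}{S_{avail}} \tag{6}$$

And just like in the new donation adjustment, with $T$ = donation_duration:

$$\Delta t' = \frac{S'_{unlocked}\cdot T}{S_d - B}, \qquad t'_{rel} = t - \Delta t'$$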

Donation lock derivation

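If the donation_protection_expiry_ts timestamp $t_{exp}$ has not expired ($t_{exp} > t$), we calculate the protection damping factor, with $T_p$ the donation_protection_period:

$$f_p = \min\left(\frac{t_{exp} - t}{T_p},\ 1\right)$$

Every non-donation deposit extends the current protection (or creates a new one). Here $\Delta S$ is the minted LP amount and $S$ the LP supply before the deposit:

$$r = \frac{\Delta S}{S + \Delta S}, \qquad t'_{exp} = \min\left(\max(t_{exp}, t) + \min\left(\frac{r}{\theta}\,T_p,\ T_p\right),\ t + T_p\right)$$

The donation_protection_lp_threshold $\theta$ sets how large a deposit must be, relative to the supply, to earn a full-period extension.

The $t + T_p$ cap ensures that spamming deposits cannot prolong the protection indefinitely.

If someone deposits while protection is still active, the pool takes an additional spam penalty fee, $f_{spam} = f_p \cdot f$, where $f$ is the pool fee.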